Protecting Intellectual Property in the Age of Large Language Models

PrivateDocsAI Team

In the competitive landscape of 2026, a company’s value is increasingly tied to its proprietary data: its "Intellectual Property" (IP). Whether it’s a law firm’s unique litigation strategy, a financial firm’s proprietary analysis models, or a tech company’s trade secrets, this data is the lifeblood of the organization.

However, the rapid adoption of Generative AI has created a paradox. To stay competitive, employees must use Large Language Models (LLMs) to process information faster. But every time an employee "chats" with a confidential document using a cloud-based AI, they are effectively leaking that IP outside the corporate perimeter.

For CISOs and IT Directors, the challenge is clear: How do you provide the power of GenAI without turning your corporate secrets into public training data? The answer lies in shifting away from cloud dependencies and adopting an offline enterprise AI that brings the model to the data.

For those in highly regulated sectors, the search for a ChatGPT enterprise alternative for law firms and IP-heavy industries has led to one conclusion: true security requires a Zero-Trust AI strategy.

The LLM Data Leak: How Your IP Escapes

The primary risk of cloud-based AI isn't just a "hack" in the traditional sense. It is the architectural reality of how cloud LLMs function. When you use public, or even some "enterprise," cloud AI tools, your IP takes a path that bypasses your security perimeter:

- The Prompt Leak: Highly sensitive queries are sent to a remote server. Even with "no-training" clauses, the data must be decrypted for processing in a third-party environment you do not control.

- The Shadow AI Problem: Employees, seeking efficiency, often use personal accounts to summarize 500-page PDFs, bypassing SOC2 or GDPR controls entirely.

- The Feedback Loop: Where a cloud provider retains prompts for training, logging, or caching, there is a risk of inadvertent leakage, in which fragments of one user’s sensitive data influence model responses for another.

To protect IP, businesses must adopt data privacy AI tools that function entirely offline.

Pillar 1: Reclaiming the Perimeter with Private RAG Architecture

The gold standard for document intelligence is Retrieval-Augmented Generation (RAG). It allows an AI to provide answers based specifically on your corporate documents. However, "Cloud RAG" requires you to index your IP in a remote vector database.

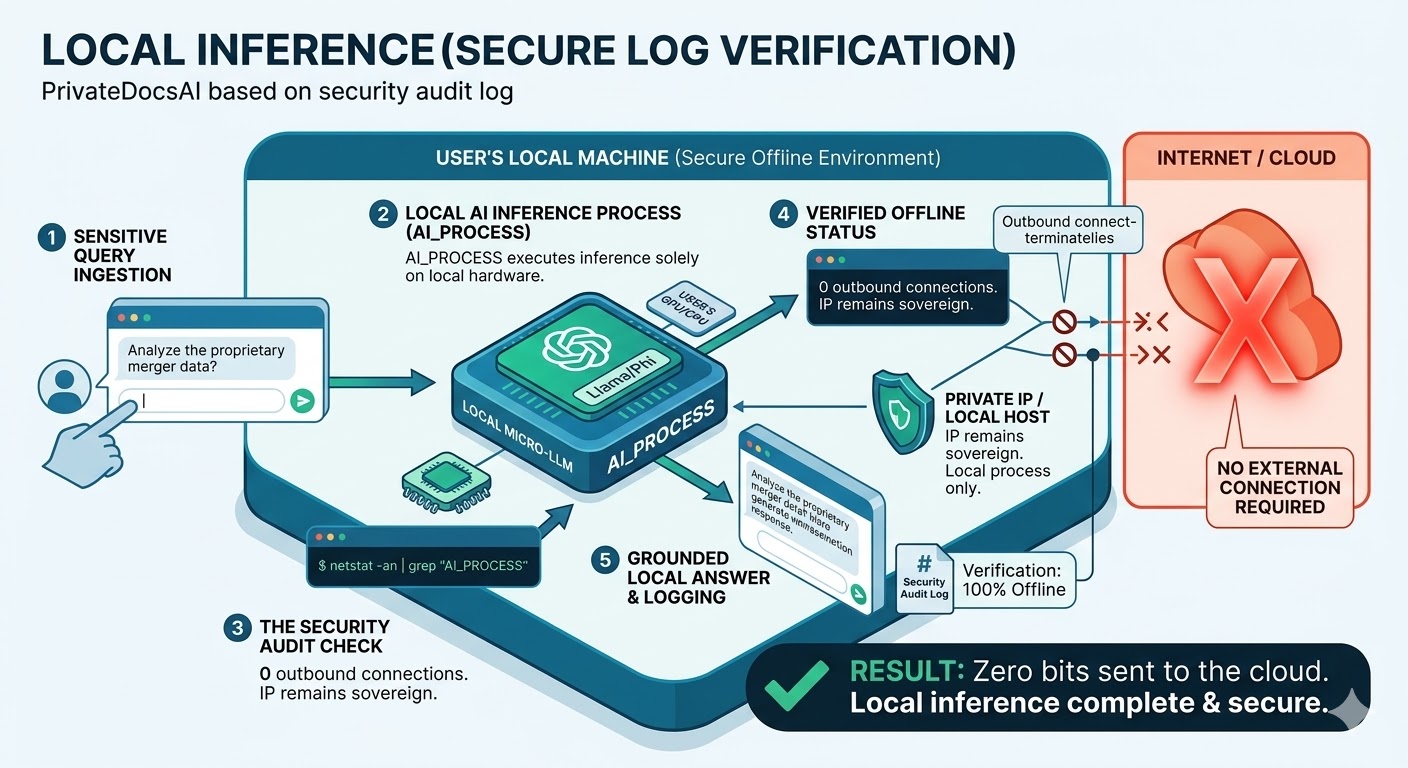

PrivateDocsAI solves this by implementing a Private RAG architecture. This means the entire intelligence cycle—from the moment a PDF is ingested to the final answer—happens on your own hardware.

On-Device Processing: The Technical Reality

- Local Embedding: PrivateDocsAI uses local models like bge-m3 to turn your IP into mathematical vectors.

- Local Vector Storage: These vectors are stored in ChromaDB on your local SSD, not a cloud server.

- Local Inference: Micro-LLMs (Qwen, Phi, Llama) run on your CPU/GPU to generate insights without an internet connection.
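To make the embed, store, and retrieve steps above concrete, here is a minimal sketch that runs entirely in local memory. It is not PrivateDocsAI's implementation: the `embed` function is a toy word-count vector standing in for a real embedding model such as bge-m3, `LocalVectorStore` is a hypothetical in-memory stand-in for a local vector database such as ChromaDB, and the sample documents are invented for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for a local embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class LocalVectorStore:
    """Hypothetical in-memory stand-in for a local vector database."""

    def __init__(self) -> None:
        self._rows: list[tuple[Counter, str, int, str]] = []

    def add(self, source: str, page: int, text: str) -> None:
        # Nothing leaves the process: the vector is computed and kept locally.
        self._rows.append((embed(text), source, page, text))

    def query(self, question: str, k: int = 2) -> list[tuple[float, str, int, str]]:
        """Return the k most similar chunks as (score, source, page, text)."""
        q = embed(question)
        scored = [(cosine(q, vec), src, page, text) for vec, src, page, text in self._rows]
        scored.sort(key=lambda row: row[0], reverse=True)
        return scored[:k]


store = LocalVectorStore()
store.add("contract.pdf", 12, "Either party may terminate this agreement with 30 days written notice.")
store.add("q3_report.pdf", 4, "Quarterly revenue grew 12 percent in the third quarter.")
store.add("patent.pdf", 2, "The patent covers a method for cooling battery cells.")

top = store.query("How many days notice to terminate the agreement?", k=1)[0]
print(top[1], top[2])  # contract.pdf 12
```

In a real deployment the retrieved chunks would then be passed to a locally running model for the inference step; the point of the sketch is only that every stage can run without a network call.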

Pillar 2: Micro-LLMs and Local Performance

A common misconception in 2026 is that you need a "Giant" cloud model to handle enterprise tasks. The reality is that for specialized tasks, such as summarizing legal briefs or extracting data from complex financial tables, Local LLMs for business can be just as effective as much larger cloud models.

Because PrivateDocsAI uses optimized Micro-LLMs, the processing is incredibly fast. There is no latency from cloud round-trips. Furthermore, PrivateDocsAI is hardware agnostic. It auto-scales its performance to run on everything from a standard business laptop to a high-end workstation, ensuring that every employee has access to a secure document AI without a massive hardware overhaul.
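One way to picture hardware-aware scaling is a simple tier lookup: inspect the machine, then pick the largest model tier it can support. The sketch below is purely illustrative. The tier labels, RAM thresholds, and GPU requirement are hypothetical values chosen for the example, not PrivateDocsAI's actual selection logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    label: str
    min_ram_gb: float
    needs_gpu: bool


# Hypothetical tiers, ordered from most to least demanding.
TIERS = (
    Tier("large", 32, True),
    Tier("medium", 16, False),
    Tier("small", 8, False),
)


def pick_tier(ram_gb: float, has_gpu: bool) -> Tier:
    """Return the most capable tier this machine satisfies."""
    for tier in TIERS:
        if ram_gb >= tier.min_ram_gb and (has_gpu or not tier.needs_gpu):
            return tier
    raise ValueError("machine is below the smallest supported tier")


print(pick_tier(ram_gb=16, has_gpu=False).label)  # medium
```

Keeping the selection in a pure function like this makes it easy to test against different machine profiles without needing the hardware itself.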

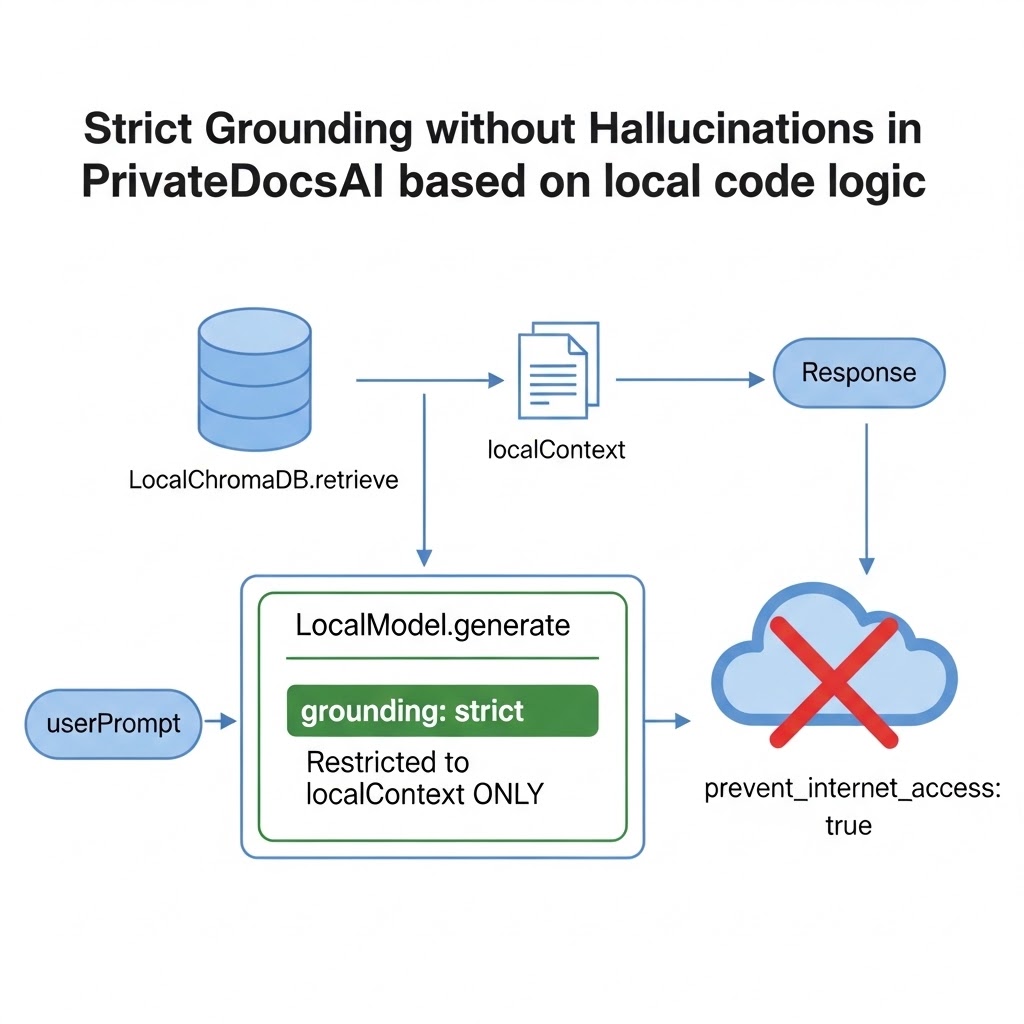

Pillar 3: Strict Grounding – The End of Hallucinations

When dealing with Intellectual Property, accuracy is non-negotiable. One of the greatest risks of public AI is "hallucination," where the model makes up information based on its general training data.

PrivateDocsAI implements Strict Grounding. The AI is constrained to answer only from the documents you have uploaded. If a lawyer asks about a specific clause in a contract, the AI will cite the exact page and paragraph in the local file. If the information isn't there, it simply says so. This guards against an AI generating "legal fictions" or "financial fantasies" that could lead to liability.
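The grounding behavior described above can be sketched as a small wrapper around the model call: refuse when retrieval finds nothing relevant, otherwise instruct the model to use only the retrieved passages and append citations. This is a minimal sketch under stated assumptions, not the product's actual code. `Passage`, `grounded_answer`, the `min_score` threshold, and the `generate` callable (a stand-in for a local LLM) are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

NOT_FOUND = "The uploaded documents do not contain this information."


@dataclass(frozen=True)
class Passage:
    source: str
    page: int
    paragraph: int
    text: str
    score: float  # retrieval similarity, assumed to lie in [0, 1]


def build_prompt(question: str, passages: Sequence[Passage]) -> str:
    """Build a prompt that restricts the model to the numbered passages."""
    context = "\n".join(f"[{i}] {p.text}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using ONLY the numbered passages below. "
        f"If they do not contain the answer, reply exactly: {NOT_FOUND}\n\n"
        f"{context}\n\nQuestion: {question}"
    )


def grounded_answer(
    question: str,
    passages: Sequence[Passage],
    generate: Callable[[str], str],
    min_score: float = 0.35,  # hypothetical relevance cutoff
) -> str:
    relevant = [p for p in passages if p.score >= min_score]
    if not relevant:
        # Refuse before the model is ever called, so it cannot improvise.
        return NOT_FOUND
    answer = generate(build_prompt(question, relevant))
    citations = "; ".join(
        f"{p.source}, p. {p.page}, para. {p.paragraph}" for p in relevant
    )
    return f"{answer}\n\nSources: {citations}"
```

The refusal path is handled in plain code rather than left to the model, which is the part of the design that does not depend on the model following instructions.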

Use Case: Legal and Financial IP Protection

For Law Firms

Lawyers are the custodians of their clients' most sensitive IP. Using a public LLM for discovery or trial prep can breach a lawyer's duty of confidentiality. PrivateDocsAI serves as the premier ChatGPT enterprise alternative for law firms, allowing associates to chat with thousands of pages of discovery documents instantly and offline.

For Financial Analysts

Market-moving data and proprietary investment models are high-value targets. By using an offline enterprise AI, analysts can use Smart Table Parsing (advanced local OCR) to extract data from quarterly reports and invoices without that data ever hitting a public API.

Compliance as a Side Effect of Security

When you bring the AI to the data, compliance stops being a hurdle and starts being a side effect of good architecture.

- SOC2 & HIPAA: Since no data leaves your perimeter, the AI tooling adds no new third-party vendor to your audit scope, whether that is the SOC2 "Trust Services Criteria" or HIPAA's business-associate requirements.

- GDPR: No data is sent to a third-party AI processor or transferred to "Third Countries," so there is no AI-vendor DPA or cross-border transfer mechanism to negotiate.

- Intellectual Property: You maintain 100% data sovereignty.

The ROI of Sovereignty

Building a Zero-Trust AI strategy with PrivateDocsAI provides a clear return on investment:

- Zero API Costs: No more per-token billing or cloud subscription "seat taxes."

- Risk Mitigation: Avoiding a single IP leak can save a company millions in legal fees and lost competitive advantage.

- Productivity: Instant Document Chat means no more time wasted manually searching through dense PDFs.

Conclusion: Don't Let Your IP Become the Model's Training Data

The age of the Large Language Model is an age of opportunity, but it is also an age of risk. If you are not in control of your AI's hardware, you are not in control of your Intellectual Property.

By adopting a local-first, offline enterprise AI like PrivateDocsAI, you can provide your team with the world-class tools they need while ensuring your firm's secrets remain a secret. It’s time to rethink the perimeter and bring the AI to the data.

Next steps

Ready to test a truly private AI? Download the PrivateDocsAI desktop app today and start your free 7-day trial. Experience offline, local RAG on your own hardware, with no credit card required, and your documents never leave your machine.