Why 'Data-at-Rest' Encryption Isn't Enough for AI Processing

PrivateDocsAI Team

In 2026, many enterprise security teams are operating under a dangerous false sense of security. When evaluating Generative AI tools, the first box a CISO often checks is "Encryption at Rest." It sounds definitive. It sounds safe. But in the world of Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG), encryption at rest is the baseline, not the finish line.

The fundamental challenge of Generative AI is that data must eventually be decrypted to be processed. Whether you are using a cloud-based ChatGPT enterprise alternative for law firms or a general-purpose productivity bot, your data must be "read" by the model. If that reading happens in the cloud, encryption at rest offers no protection once the data is decrypted in the provider's memory.

To truly protect corporate intellectual property and maintain compliance with SOC 2, HIPAA, and GDPR, organizations must shift toward a Zero-Trust AI strategy that prioritizes data sovereignty through local processing.

The "Data-in-Use" Problem: The AI Security Gap

Traditional data security focuses on two states: Data-at-Rest (sitting on a drive) and Data-in-Transit (moving across the network). For decades, encrypting these two states was sufficient for most B2B SaaS applications.

Generative AI introduces a high-stakes third state: Data-in-Use.

When you query an AI about a sensitive legal contract or a confidential financial forecast, the cloud server must decrypt that information to split it into "tokens" and compute "embeddings." During this window, your most valuable assets are vulnerable to:

- Memory Scraping: Exploits at the server or hardware level that read plaintext from memory while it is being processed.

- Model Inversion: Attacks that reconstruct sensitive data from a model's outputs, a risk whenever prompts or documents are retained and used for training.

- Provider Overreach: Debugging logs or "safety monitoring" that captures snippets of unencrypted prompts.

This is why offline enterprise AI has become the only viable path for firms that handle highly confidential client data. With PrivateDocsAI, data in use stays on your physical hardware, processed by your local CPU/GPU.

Building a Zero-Trust AI Strategy in 2026

A Zero-Trust architecture assumes that the network is always hostile. In the context of AI, this means we should never trust a third-party server with unencrypted data. Here is how to architect a compliant, secure environment using a Private RAG architecture.

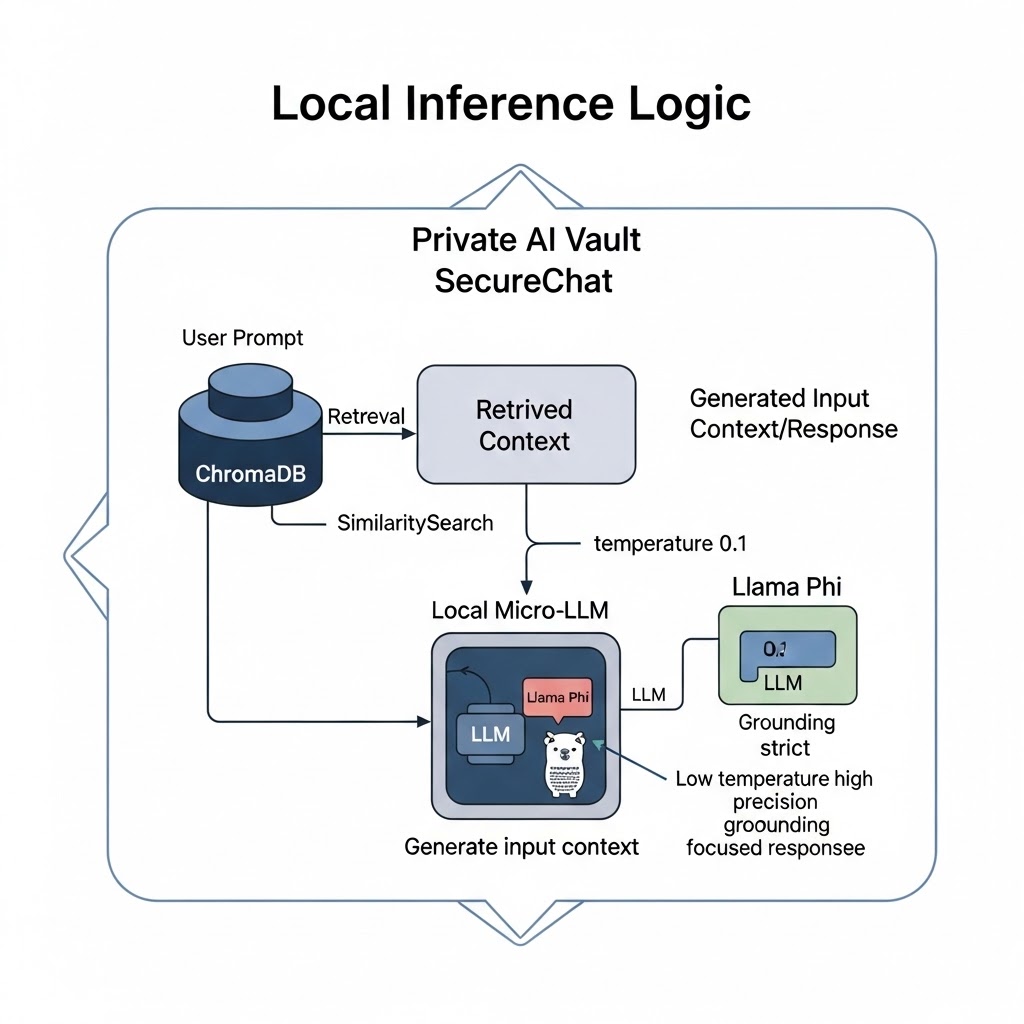

1. Local Vectorization and Embedding

In a typical cloud RAG setup, your documents are sent to a cloud API to be turned into vectors. This is where the first exposure occurs. In PrivateDocsAI, we use local embedding models like bge-m3. These models run entirely on the host machine, meaning the mathematical representation of your data never leaves the machine.
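The flow can be sketched in a few lines of Python. To stay runnable without any downloads, this sketch replaces a real embedding model such as bge-m3 with a toy hashed bag-of-words vector; `embed`, `LocalIndex`, and the dimension of 256 are illustrative assumptions, not PrivateDocsAI's implementation. The point is only the shape of the pipeline: text goes in, vectors and search results stay in process memory, and no network call is made.

```python
import hashlib
import math
import re


def embed(text: str, dim: int = 256) -> list[float]:
    """Toy stand-in for a local embedding model: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # Stable hash so the same token always lands in the same bucket.
        digest = hashlib.sha256(token.encode()).digest()
        bucket = int.from_bytes(digest[:4], "big") % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class LocalIndex:
    """In-memory vector store (hypothetical); nothing is written or sent anywhere."""

    def __init__(self) -> None:
        self._items: list[tuple[str, list[float]]] = []

    def add(self, chunk: str) -> None:
        self._items.append((chunk, embed(chunk)))

    def search(self, query: str, top_k: int = 3) -> list[tuple[float, str]]:
        q = embed(query)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = [(sum(a * b for a, b in zip(q, v)), chunk) for chunk, v in self._items]
        return sorted(scored, reverse=True)[:top_k]


index = LocalIndex()
index.add("The indemnification clause caps liability at the contract value.")
index.add("Quarterly revenue forecast for the retail division.")
best_score, best_chunk = index.search("What is the liability cap?", top_k=1)[0]
```

In a real deployment the `embed` function would call a locally loaded model instead of hashing tokens; the surrounding index and search logic would look much the same.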

2. Micro-LLM Specialization

You do not need a trillion-parameter cloud model to summarize a document. In 2026, local LLMs for business such as Qwen, Phi, and Llama provide high-fidelity extraction and summarization on-device. These "Micro-LLMs" avoid the latency of a round-trip to a remote data center and are more secure because the data never leaves the machine.

3. Strict Grounding and Hallucination Control

A Zero-Trust strategy also requires trust in the output. Public AI models often "hallucinate" by filling gaps from their general training data. PrivateDocsAI uses Strict Grounding, restricting the AI to answer only from the documents you have uploaded locally. This keeps answers traceable to your source documents and prevents the model from leaking external, irrelevant information into your workspace.
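One common way to approximate strict grounding is a retrieval gate plus a context-restricted prompt. The Python sketch below illustrates that idea only; `build_grounded_prompt`, `answer`, `MIN_SCORE`, and the refusal text are hypothetical names and values, not PrivateDocsAI's actual mechanism. It assumes retrieval returns `(score, chunk)` pairs from a local index.

```python
from typing import Callable, Optional

REFUSAL = "I can't answer that from the documents provided."
MIN_SCORE = 0.25  # assumed relevance threshold; tune per embedding model


def build_grounded_prompt(question: str, hits: list[tuple[float, str]]) -> Optional[str]:
    """Return a context-restricted prompt, or None when no chunk is relevant enough."""
    relevant = [chunk for score, chunk in hits if score >= MIN_SCORE]
    if not relevant:
        return None  # caller should refuse without invoking the model
    context = "\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(relevant, start=1))
    return (
        "Answer using ONLY the numbered context below and cite the passage number. "
        f"If the context does not contain the answer, reply exactly: {REFUSAL}\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def answer(question: str, hits: list[tuple[float, str]],
           generate: Callable[[str], str]) -> str:
    """`generate` is any callable wrapping a local model (hypothetical)."""
    prompt = build_grounded_prompt(question, hits)
    return REFUSAL if prompt is None else generate(prompt)
```

A prompt instruction alone does not guarantee the model stays within the context, which is why the no-evidence case is handled in code before the model is called at all.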

Why Law Firms are Leading the Move to Local AI

Lawyers are the "canary in the coal mine" for AI privacy. A lawyer cannot legally or ethically upload client discovery data to a third-party server without risking a breach of attorney-client privilege.

For these professionals, a ChatGPT enterprise alternative for law firms isn't just a preference; it's a regulatory requirement. PrivateDocsAI gives legal teams:

- Instant Document Chat: Query thousands of PDFs instantly.

- Smart Table Parsing: Use advanced local OCR to extract data from complex invoices and financial statements without cloud intervention.

- Data Sovereignty: Keep the AI assistant functional and secure even if the firm's internet goes down.

The Technical Reality: Hardware-Agnostic Performance

A common concern for IT Directors is whether their current hardware can support a secure document AI. The beauty of the 2026 AI ecosystem is the rise of hardware-agnostic software.

PrivateDocsAI auto-scales its performance. Whether an employee is on a standard business laptop or a partner has a high-end workstation, the local AI engine optimizes its memory usage to provide a smooth experience.
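As a rough illustration of how this kind of auto-scaling could work, the Python sketch below picks a runtime profile from the memory available on the machine. The tier names, RAM thresholds, context sizes, and the `pick_profile` function are all placeholders invented for this example; they are not PrivateDocsAI's actual tiers or measured requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    name: str
    min_ram_gb: float     # hypothetical requirement, not a measured figure
    context_tokens: int   # hypothetical context window for this tier


# Ordered from most to least demanding; every number is a placeholder.
PROFILES = [
    Profile("large", min_ram_gb=32, context_tokens=16384),
    Profile("medium", min_ram_gb=16, context_tokens=8192),
    Profile("small", min_ram_gb=8, context_tokens=4096),
]


def pick_profile(available_ram_gb: float, reserve_gb: float = 4.0) -> Profile:
    """Choose the most capable profile that fits after reserving RAM for the OS and apps."""
    usable = available_ram_gb - reserve_gb
    for profile in PROFILES:
        if usable >= profile.min_ram_gb:
            return profile
    return PROFILES[-1]  # fall back to the smallest tier


laptop = pick_profile(16)       # standard business laptop
workstation = pick_profile(64)  # high-end workstation
```

A real engine would also account for GPU memory and model quantization, which this sketch leaves out.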

Compliance and ROI: A Dual Advantage

Implementing data privacy AI tools that run locally doesn't just satisfy the CISO; it satisfies the CFO.

Risk Mitigation

By keeping AI offline, you avoid adding another third-party data processor, which reduces the need for complex Data Processing Agreements (DPAs), and you reduce the "Shadow AI" risk where employees paste sensitive data into public tools. This can significantly simplify SOC 2 and HIPAA audits.

Cost Control

Cloud AI subscriptions often involve unpredictable "per-token" costs and high per-seat monthly fees. PrivateDocsAI operates on a Local-Only B2B Subscription. You pay for the software, and you use your existing hardware. This results in a predictable, lower Total Cost of Ownership (TCO) compared to enterprise cloud alternatives.
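The predictability argument can be made concrete with simple arithmetic. The Python sketch below compares a usage-based cloud bill with a flat local license; every price and usage figure is a placeholder chosen for illustration, not real vendor or PrivateDocsAI pricing, and the function names are hypothetical.

```python
def cloud_monthly_cost(seats: int, seat_fee: float,
                       tokens_per_seat: int, price_per_million: float) -> float:
    """Seat fees plus usage-based token charges."""
    usage = seats * tokens_per_seat / 1_000_000 * price_per_million
    return seats * seat_fee + usage


def local_monthly_cost(seats: int, license_fee: float) -> float:
    """Flat per-seat license; inference runs on hardware the firm already owns."""
    return seats * license_fee


# Placeholder inputs, NOT real pricing: 50 seats in a light and a heavy usage month.
light = cloud_monthly_cost(50, seat_fee=30.0, tokens_per_seat=1_000_000, price_per_million=10.0)
heavy = cloud_monthly_cost(50, seat_fee=30.0, tokens_per_seat=5_000_000, price_per_million=10.0)
local = local_monthly_cost(50, license_fee=25.0)

print(f"cloud: {light:.0f} to {heavy:.0f} per month; local: {local:.0f} per month")
```

With these placeholder inputs the cloud bill swings with usage while the local bill stays flat. The local figure excludes electricity, hardware refresh, and IT support, so a real TCO comparison should add those in.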

The Checklist for Your AI Strategy

If you are currently building your firm's AI roadmap for the remainder of 2026, ensure your strategy answers these three questions:

- Where does the decryption happen? If it happens on a server you don't own, you don't have data sovereignty.

- Does it work without an internet connection? If the answer is no, your "Private" AI is likely just a cloud wrapper.

- Is the grounding strict? Can the AI pull in external "hallucinations," or is it locked to your local corporate knowledge base?
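The second question on the checklist can be partly tested in code. The Python sketch below blocks socket creation while a pipeline runs and reports whether anything tried to reach the network; `no_network`, `runs_offline`, and `NetworkBlocked` are hypothetical helper names. It assumes the pipeline is a Python callable running in the same process.

```python
import socket
from contextlib import contextmanager


class NetworkBlocked(RuntimeError):
    """Raised when code tries to open a socket during an offline check."""


@contextmanager
def no_network():
    """Make any attempt to create a socket fail inside the `with` block."""
    original = socket.socket

    def guarded_socket(*args, **kwargs):
        raise NetworkBlocked("network access attempted during offline check")

    socket.socket = guarded_socket
    try:
        yield
    finally:
        socket.socket = original  # always restore the real socket class


def runs_offline(pipeline) -> bool:
    """Return True if `pipeline()` completes without trying to open a socket."""
    try:
        with no_network():
            pipeline()
        return True
    except NetworkBlocked:
        return False


local_ok = runs_offline(lambda: sum(range(10)))    # pure computation
cloud_ok = runs_offline(lambda: socket.socket())   # tries to open a socket
```

This only catches sockets opened through Python's `socket` module in the same process. For a desktop application, the stronger test is to disconnect the machine or block the app at the firewall and confirm it still answers questions.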

Conclusion: Reclaiming the Digital Perimeter

The Generative AI revolution is the greatest productivity multiplier of our time, but it cannot come at the cost of corporate security. "Data-at-rest" encryption is a start, but it is not a complete shield.

To thrive in a regulated environment, businesses must adopt offline enterprise AI that respects the boundaries of the local machine. PrivateDocsAI represents the next generation of this movement, delivering the power of generative AI without sending your data to the cloud.

Next steps

Ready to test a truly private AI? Download the PrivateDocsAI desktop app today and start your free 7-day trial. Experience offline, local RAG on your own hardware: no credit card required, and your documents never leave your machine.