How to Build a Zero-Trust AI Strategy in 2026

PrivateDocsAI Team

In 2026, the phrase "Zero Trust" is no longer just a buzzword; it is a prerequisite for corporate survival. As generative AI becomes woven into the fabric of legal research, financial analysis, and HR workflows, the attack surface for sensitive data grows with every new integration.

The challenge? Traditional security perimeters were built to keep people out. Generative AI, specifically cloud-based models, requires you to invite a third party in—giving them access to your most protected intellectual property just so they can process it.

For the modern CISO, building a Zero-Trust AI strategy means moving away from "trusted vendors" and toward "verifiable architecture."

The Core Conflict: AI Utility vs. Zero-Trust Principles

Zero-Trust is based on a simple maxim: Never trust, always verify. When an employee uses a standard cloud-based LLM, the organization breaks that maxim at three points. It implicitly trusts the transit layer (the internet), the processing layer (the AI vendor's servers), and the storage layer (the vendor's database), and it can independently verify none of them.

For law firms and financial institutions, this "trust chain" is a liability. To build a true ChatGPT enterprise alternative for law firms, you must eliminate the chain entirely. This is achieved through offline enterprise AI.

Pillar 1: Data Sovereignty Through Local Processing

The first step in a Zero-Trust AI strategy is ensuring that data never leaves the hardware it resides on. In 2026, the most effective data privacy AI tools are those that operate on the edge.

By deploying PrivateDocsAI, organizations can process massive volumes of sensitive documents—from litigation bundles to quarterly tax filings—entirely on the local CPU or GPU.

Why Local Micro-LLMs are the Answer

We’ve moved past the era where "bigger is better." For narrow enterprise tasks like document summarization and smart data extraction, Local Micro-LLMs (such as compact variants of Qwen, Phi, and Llama) can deliver accuracy comparable to, and on some tasks better than, massive cloud models, without the security overhead.

Pillar 2: Implementing Private RAG Architecture

Retrieval-Augmented Generation (RAG) is the gold standard for chatting with corporate documents. However, "Cloud RAG" still requires uploading your internal knowledge base to a third-party vector database.

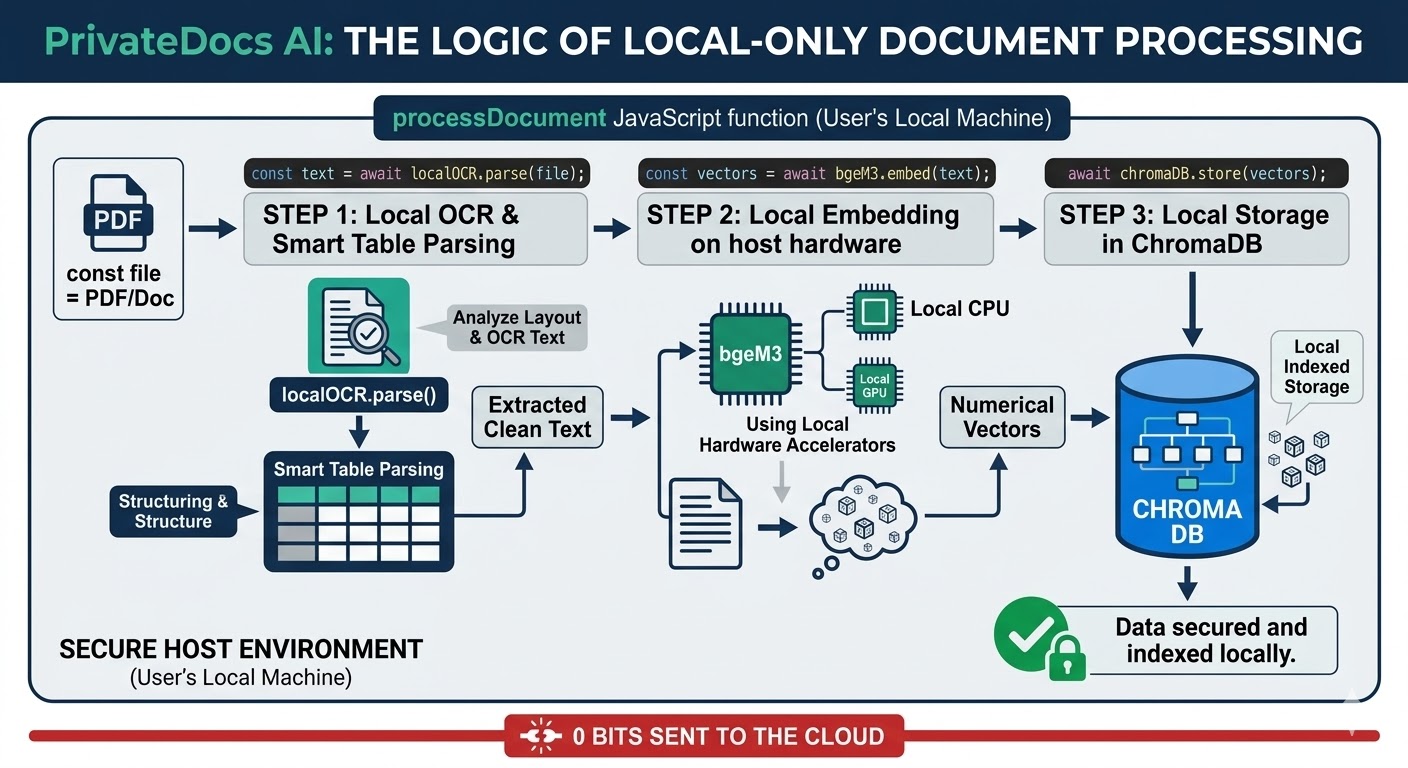

A Private RAG architecture keeps the vector database (like ChromaDB) and the embedding models (like bge-m3) on your own host machine.
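To make the idea concrete, here is a minimal sketch of the retrieval half of a Private RAG pipeline, with everything held in local memory. It is an illustration only, not PrivateDocsAI's implementation: `LocalVectorStore` is a hypothetical stand-in for a locally hosted vector database such as ChromaDB, and the toy `embed` function stands in for a real embedding model such as bge-m3. The file names and sample sentences are made up for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'. Assumption: a real deployment would
    call a locally hosted embedding model (for example bge-m3) here."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LocalVectorStore:
    """Hypothetical in-memory index standing in for a local vector database.
    Nothing in this class opens a network connection."""

    def __init__(self) -> None:
        self._items = []  # list of (doc_id, text, vector)

    def add(self, doc_id: str, text: str) -> None:
        self._items.append((doc_id, text, embed(text)))

    def query(self, question: str, k: int = 2):
        """Return the k most similar documents as (doc_id, text, score)."""
        q = embed(question)
        scored = [(doc_id, text, cosine(q, vec)) for doc_id, text, vec in self._items]
        scored.sort(key=lambda item: item[2], reverse=True)
        return scored[:k]


store = LocalVectorStore()
store.add("nda.txt", "The non-disclosure agreement expires after five years.")
store.add("lease.txt", "The office lease requires ninety days notice before termination.")
store.add("policy.txt", "Employees accrue vacation days monthly.")

top_hits = store.query("How much notice is needed for lease termination?", k=1)
print(top_hits[0][0])
```

The point of the sketch is the data flow: documents are embedded, indexed, and searched on the same machine, so the knowledge base is never uploaded anywhere.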

The "Strict Grounding" Requirement

In a Zero-Trust framework, you cannot allow the AI to "hallucinate" or pull information from the public internet. PrivateDocsAI is hardcoded with strict grounding. This means the AI engine is restricted by design to answering queries based only on the local files you have provided.
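One common way to approximate strict grounding is sketched below. This is an assumption about how such a guard could look, not a description of PrivateDocsAI's internals: the function names, the refusal message, and the `min_score` threshold are all hypothetical. The guard refuses outright when retrieval finds nothing relevant, and otherwise builds a prompt that contains only the retrieved local passages.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical refusal message used when the local files do not cover the question.
REFUSAL = "I cannot answer that from the provided documents."


def build_grounded_prompt(
    question: str,
    passages: List[Tuple[str, float]],
    min_score: float = 0.2,  # assumed relevance cutoff; tune for your retriever
) -> Optional[str]:
    """Return a context-only prompt, or None when no passage is relevant enough."""
    relevant = [text for text, score in passages if score >= min_score]
    if not relevant:
        return None
    context = "\n".join(f"[{i}] {text}" for i, text in enumerate(relevant, start=1))
    return (
        "Answer using ONLY the numbered context below. "
        f"If the answer is not in the context, reply exactly: {REFUSAL}\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def answer(
    question: str,
    passages: List[Tuple[str, float]],
    generate: Callable[[str], str],
) -> str:
    """Call the local model only when grounded context exists."""
    prompt = build_grounded_prompt(question, passages)
    if prompt is None:
        return REFUSAL  # refuse before the model is ever invoked
    return generate(prompt)


def fake_generate(prompt: str) -> str:
    """Stub standing in for a local LLM call."""
    return "stub answer"


refused = answer(
    "What is the capital of France?",
    [("Employees accrue vacation days monthly.", 0.0)],
    fake_generate,
)
grounded = answer(
    "How much notice does the lease require?",
    [("The office lease requires ninety days notice before termination.", 0.6)],
    fake_generate,
)
```

The refusal path is the part that can be enforced in code. The prompt instruction narrows what the model draws on, but on its own it reduces off-document answers rather than guaranteeing their absence, which is why the pre-check runs before generation.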

Pillar 3: Eliminating Shadow AI and Compliance Gaps

The biggest threat to a Zero-Trust environment isn't an external hacker—it's an associate lawyer or HR executive trying to be productive. If you don't provide a secure document AI, they will find a public one.

This leads to "Shadow AI," which causes:

- SOC 2/HIPAA Audit Failures: Unauthorized data processing.

- PII Leaks: Sensitive employee or client data entering public training sets.

- Loss of IP: Proprietary legal strategies being absorbed into a cloud model's training data.

A local, hardware-agnostic solution ensures that whether your team is on a standard business laptop or a high-end workstation, they have the tools they need within your security perimeter.

Pillar 4: The ROI of Local Infrastructure

Beyond security, a Zero-Trust strategy built on PrivateDocsAI is a financial win. Enterprise cloud AI services typically charge per seat, per token, or both. This creates a "tax on productivity."

By shifting to a local LLM for business, you capitalize on the hardware you already own. There are no API fees, no data egress costs, and no surprise "usage limit" invoices.
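The comparison is simple arithmetic, and a rough sketch like the one below can be adapted to your own numbers. Every input here is a hypothetical placeholder, not a quoted price from any vendor: the seat count, seat price, token volume, token price, and one-time local cost are all assumptions you should replace with figures from your own contracts.

```python
def annual_cloud_cost(
    seats: int,
    seat_price_per_month: float,
    tokens_per_seat_per_month: int,
    price_per_million_tokens: float,
) -> float:
    """Yearly spend for a service billed per seat and per token."""
    seat_fees = seats * seat_price_per_month * 12
    token_fees = (
        seats * tokens_per_seat_per_month / 1_000_000 * price_per_million_tokens * 12
    )
    return seat_fees + token_fees


def breakeven_months(one_time_local_cost: float, annual_cloud: float) -> float:
    """Months of avoided cloud spend needed to cover a one-time local cost."""
    return one_time_local_cost / (annual_cloud / 12)


# Hypothetical placeholder inputs; substitute your own figures.
cloud = annual_cloud_cost(
    seats=50,
    seat_price_per_month=30.0,
    tokens_per_seat_per_month=2_000_000,
    price_per_million_tokens=10.0,
)
months = breakeven_months(one_time_local_cost=5_000.0, annual_cloud=cloud)
print(f"Annual cloud spend: {cloud:,.2f}")
print(f"Break-even after {months:.1f} months")
```

Note that the token term scales with usage, so the cloud figure grows as adoption grows, while the local figure in this sketch is modeled as a fixed cost.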

Technical Transparency: How It Works Under the Hood

For the IT Director, the beauty of PrivateDocsAI lies in its simplicity. It is a downloadable desktop application. It does not require a complex Kubernetes cluster or a $100k GPU server.

Conclusion: The Path Forward in 2026

Building a Zero-Trust AI strategy requires a fundamental shift in how we view "Enterprise Software." It is no longer enough for a tool to be powerful; it must be sovereign.

By prioritizing offline enterprise AI, law firms and data-sensitive businesses can finally reap the rewards of the generative AI revolution without the "cloud tax" on their security and compliance.

Next steps

Ready to test a truly private AI? Download the PrivateDocsAI desktop app today and start your free 7-day trial. Experience offline, local RAG on your own hardware: no credit card required, and your documents never leave your machine.