Beyond the Opt-Out: Why Enterprise Cloud AI Subscriptions Still Risk Data Leaks

PrivateDocsAI Team

For many law firms and financial institutions, the "Enterprise" badge on a cloud AI subscription feels like a bulletproof vest. The sales pitch is compelling: “Your data won't be used to train our models. We have an opt-out policy. Your data is encrypted.”

But for a CISO or a Senior Partner responsible for privileged client information, an "opt-out" is not the same as data sovereignty.

As long as your data travels over the public internet to a third-party server, the risk isn't just about model training; it's about the fundamental architecture of the cloud. In this post, we'll explore why enterprise cloud AI is often a "leaky bucket" and why a ChatGPT Enterprise alternative for law firms must be local-first to be truly secure.

The Illusion of the Cloud Perimeter

The primary selling point of "Enterprise" ChatGPT and similar tools is the promise that your data remains private. However, from a technical and legal standpoint, "private" is a relative term.

When you use a cloud-based secure document AI, your most sensitive files—depositions, M&A contracts, or HR records—undergo a journey that looks like this:

- Transit: Your data moves from your local machine through your ISP to the provider’s data center.

- Third-Party Processing: The data is decrypted on a server you do not control so the LLM can "read" it.

- Persistence: Even if it isn't used for training, the data often sits in "temporary" logs or caches for debugging or safety monitoring.

Each step represents a point of failure. Whether it’s a sophisticated man-in-the-middle attack, a rogue employee at the AI company, or a subpoena served to the cloud provider, your data is out of your hands.

The "Zero-Trust" Fallacy in Cloud AI

In a true zero-trust environment, you assume that the network is compromised. You don't trust the vendor; you trust the math. Cloud AI requires you to trust the vendor’s policy. For law firms bound by strict confidentiality, relying on a "policy" rather than a "physical reality" is a gamble with client trust.

Why Law Firms Need a Local ChatGPT Enterprise Alternative

The legal industry is a prime target for data exfiltration. If you are summarizing massive, highly confidential documents, you cannot afford the "metadata leak." Even the fact that your firm is querying a specific set of documents about a specific merger can be a signal to bad actors.

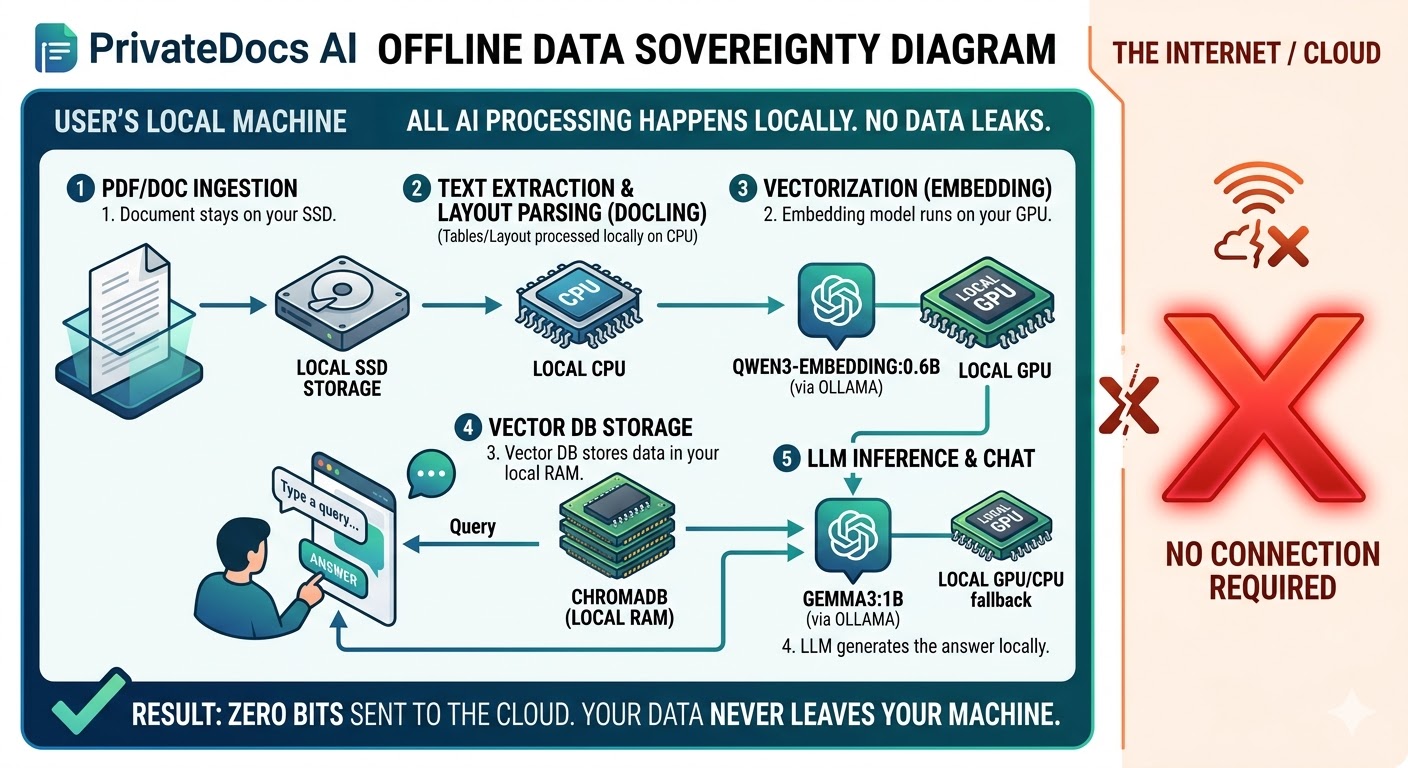

This is why we built PrivateDocsAI. It isn't just another "wrapper" for a cloud API. It is a completely offline enterprise AI that brings the model to your data, rather than the other way around.

The Private RAG Advantage

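By using a Private RAG (retrieval-augmented generation) architecture, your firm ensures that the "brain" (the language model) and the "library" (the searchable index of your documents) exist on the same physical hardware, so neither the documents nor the questions asked about them cross the network.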
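To make the idea concrete, here is a minimal sketch of the retrieval half of a RAG pipeline running entirely in one process. It is an illustration under simplifying assumptions, not how PrivateDocsAI is implemented: the `LocalIndex` class, the bag-of-words scoring, and the sample documents are all hypothetical, and a real system would typically use embeddings and hand the assembled prompt to a locally hosted model.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LocalIndex:
    """Hypothetical in-memory "library": every chunk stays in this process."""

    def __init__(self):
        self.chunks = []  # list of (source_name, text, term_counts)

    def add(self, source, text):
        self.chunks.append((source, text, Counter(tokenize(text))))

    def search(self, query, k=2):
        """Return up to k (source, text) pairs ranked by similarity to the query."""
        q = Counter(tokenize(query))
        scored = [(cosine(q, vec), source, text) for source, text, vec in self.chunks]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(source, text) for score, source, text in scored[:k] if score > 0]


def build_prompt(query, hits):
    """Assemble the context that a locally hosted model (the "brain") would receive."""
    context = "\n".join(f"[{source}] {text}" for source, text in hits)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Made-up sample documents, for illustration only.
index = LocalIndex()
index.add("nda.txt", "The receiving party shall keep all disclosed information confidential for five years.")
index.add("lease.txt", "The tenant shall pay rent on the first day of each month.")
index.add("merger.txt", "The termination fee is payable if the merger agreement is terminated by the buyer.")

question = "What is the termination fee in the merger?"
hits = index.search(question, k=1)
prompt = build_prompt(question, hits)
```

Nothing in this sketch opens a network connection: the index, the query, and the prompt all live in local memory, which is the property the "same physical hardware" claim depends on.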

Compliance: SOC 2, HIPAA, and the GDPR Headache

If your firm handles healthcare data (HIPAA) or the personal data of people in the EU (GDPR), the cloud AI "opt-out" might not be enough to keep you compliant.

The GDPR's right to erasure (the "Right to be Forgotten") and its strict rules on transferring personal data to non-EU entities (like US-based AI giants) make cloud AI a compliance nightmare. If an AI provider stores a "cache" of your document in a US data center without a valid transfer mechanism in place, you may already be in violation.

PrivateDocsAI simplifies compliance by removing the "Third-Party Processor" from the equation entirely. Since the application is a downloadable desktop tool that runs 100% offline, your data never leaves your jurisdiction—or your room.

Performance: Can Local Models Match the Cloud?

A common concern for IT Directors is whether a local LLM for business can compete with the power of GPT-4. In 2026, the answer is a resounding "Yes" for document-specific tasks.

Small, highly optimized "Micro-LLMs" (models from families such as Qwen, Phi, and Llama) can be fine-tuned for high-fidelity extraction and summarization. For narrow tasks like Smart Table Parsing or identifying specific clauses in a 500-page PDF, a specialized local model can match, and in some cases outperform, a general-purpose cloud model, because these tasks reward precise reading of the document at hand more than the broad general knowledge a very large model carries.

Smart Table Parsing and OCR

Legal documents aren't just text; they are complex tables, handwritten notes, and scanned invoices. PrivateDocsAI uses advanced local OCR to ensure that even the densest financial tables are ingested accurately without ever touching an external API.

The Hidden Costs of Cloud AI

Beyond security risks, enterprise cloud AI subscriptions are expensive. They often involve:

- Per-seat licensing: Paying $30-$60 per user, per month.

- API Usage Costs: Paying for every "token" processed.

- Infrastructure Overhead: Needing high-bandwidth, dedicated lines to handle massive document uploads.

PrivateDocsAI uses a local-only B2B license model. You pay for the software and run it on your own hardware. Whether you process 10 documents or 10,000, your license cost remains the same.
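A quick back-of-the-envelope calculation shows how the two pricing shapes compare. The sketch below uses the $30 to $60 per-seat range quoted above; the flat license price is a made-up placeholder (no actual PrivateDocsAI price is implied), and the comparison ignores token charges on the cloud side and hardware on the local side.

```python
def per_seat_cost(users, price_per_user_month, months=12):
    """Total seat fees for a cloud subscription over the given period."""
    return users * price_per_user_month * months


def break_even_users(flat_license_per_year, price_per_user_month, months=12):
    """Smallest user count at which seat fees meet or exceed the flat license."""
    per_user_year = price_per_user_month * months
    return -(-flat_license_per_year // per_user_year)  # ceiling division


USERS = 50                    # hypothetical firm size
FLAT_LICENSE = 10_000         # hypothetical annual flat fee, for illustration only

low = per_seat_cost(USERS, 30)    # low end of the quoted seat price
high = per_seat_cost(USERS, 60)   # high end of the quoted seat price

print(f"Seat fees for {USERS} users: ${low:,} to ${high:,} per year")
print(f"Break-even at $30/seat: {break_even_users(FLAT_LICENSE, 30)} users")
print(f"Break-even at $60/seat: {break_even_users(FLAT_LICENSE, 60)} users")
```

Substitute your own headcount and quoted prices; the point is that seat fees scale linearly with users while a flat license does not.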

Conclusion: Data Sovereignty is the Only Real Security

The "opt-out" is a feature of convenience for the provider, not a security protocol for the user. For organizations that handle sensitive intellectual property, the surest way to stay in control of that data is to keep it where it belongs: on your hardware.

If your firm is looking for a ChatGPT Enterprise alternative that prioritizes risk mitigation and ROI, it's time to move beyond the cloud.

Next steps

Ready to test a truly private AI? Download the PrivateDocsAI desktop app today and start your free 7-day trial. Experience offline, local RAG on your own hardware: no credit card required, and your documents never leave your machine.